
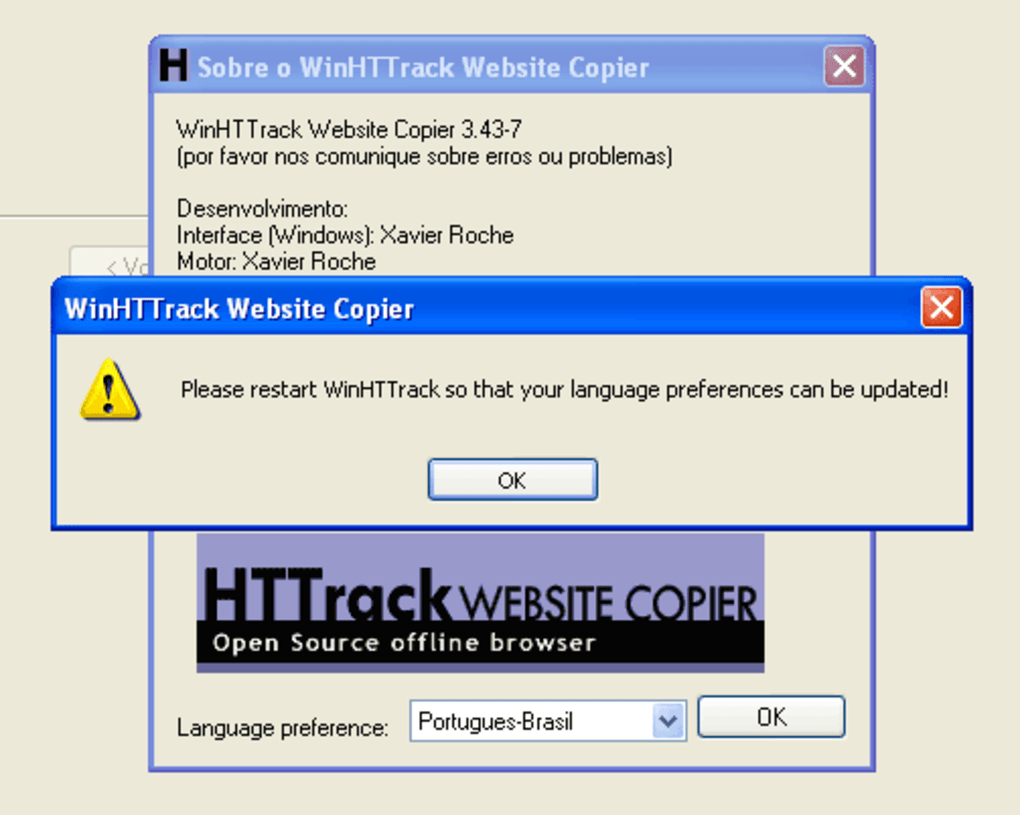

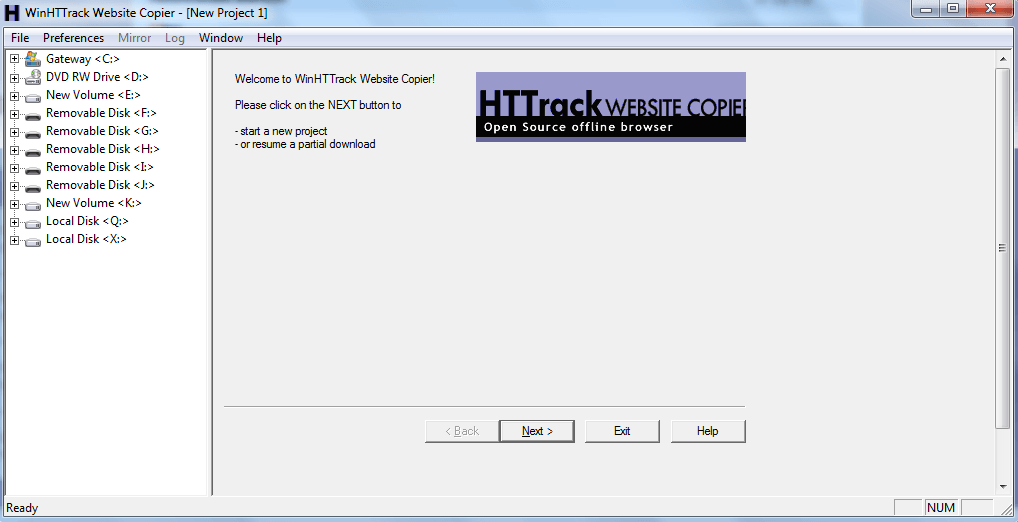

Copy a website for offline browsing using the HTTrack website copier. HTTrack Website Copier lets you easily store and view your favorite Web sites offline.

Installing HTTrack adds two new packages, httrack and libhttrack2 (0 upgraded, 2 newly installed, 0 to remove and 0 not upgraded), and uses 798 kB of additional disk space. At install time 27239 files and directories were already installed; afterwards, triggers are processed for libc-bin (2.24-11+deb9u3) and man-db (2.7.6.1-2).

Check the installed version.

`$ httrack --version`

Download a single article to the article-x directory, using the near parameter to also get files linked inside the downloaded page.

`$ httrack --mirror --ext-depth=0 --depth=1 --near --stay-on-same-address --keep-links=0 --path article-x --quiet`

Update the website located in the article-x directory.

Mirror the whole website to a directory, using filters to limit downloaded files.

`$ httrack --mirror --robots=0 --stay-on-same-domain --keep-links=0 --path --quiet -* +/*`

Continue a download located in a directory.

Mirror the whole website to a directory, using filters to limit downloaded files, with 8 concurrent connections, a 400 KB/s transfer rate limit, and a maximum of 4 connections per second.

`$ httrack --mirror --robots=0 --stay-on-same-domain --keep-links=0 --path --max-rate=409600 --connection-per-second=4 --sockets=8 --quiet -* +/*`

Mirror a single article to the article-y directory using the Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:63.0) Gecko/20100101 Firefox/63.0 user agent, a referer, and pl as the preferred language.

I am using HTTrack for copying/mirroring a website and am facing one problem. I want to capture one page together with all the links inside it (for example, links such as problem 6.11 and problem 6.10 on that page). So far I have tried the following: enter the project name and URL, then set the option so that the crawl can go both up and down.
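The update, continue, and custom user-agent variants are described above without their commands. The following is a minimal sketch of how they might look, assuming the long-option spellings from the HTTrack manual page (`--update`, `--continue`, `--user-agent`, `--referer`, `--language`). The URL, the referer, and the `run` helper are hypothetical placeholders that do not come from the original text. The helper only prints each command unless `RUN=1` is set, so the script can be reviewed before anything is downloaded.

```shell
#!/bin/sh
# Hypothetical values: the original text does not give the URL or referer.
url="https://example.org/article-y/"
referer="https://example.org/"
ua="Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:63.0) Gecko/20100101 Firefox/63.0"

# Hypothetical helper: print the command, and execute it only when RUN=1.
run() {
  echo "+ $*"
  if [ "${RUN:-0}" = "1" ]; then
    "$@"
  fi
}

# Update the mirror stored in the article-x directory (assumed invocation).
run httrack --update --path article-x --quiet

# Continue an interrupted download stored in the same directory (assumed invocation).
run httrack --continue --path article-x --quiet

# Mirror a single article to article-y with a custom user agent,
# a referer, and pl as the preferred language.
run httrack --mirror --ext-depth=0 --depth=1 --near \
  --stay-on-same-address --keep-links=0 \
  --user-agent "$ua" --referer "$referer" --language pl \
  --path article-y --quiet "$url"
```

Running the script as-is prints the three commands; running it with `RUN=1` in the environment executes them, which requires `httrack` to be installed.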
